Using GA Attribution Reports to Optimize Ad Spend

Over the past couple of months, I have spoken several times to a variety of audiences about Attribution, specifically about using the Attribution reports in Google Analytics. It’s a topic that doesn’t get a lot of spotlight even though the insights and findings can help you to significantly influence Return on Ad Spend (ROAS hereafter) for integrated ad campaigns from DoubleClick and AdWords.

You might be questioning me when I say this topic ‘doesn’t get a lot of spotlight’, because yes, Attribution is a major buzzword and if you’ve been at any analytics conference in the past 5 years you’re sure to have had your fill of hearing it. What I mean though, is that we don’t often hear much about actually using these reports, from an analyst’s perspective. So that’s what I’m going to share here.

The biggest insight that you can get from using attribution reports is that you can determine the most effective marketing channels for investment. Alternatively, you can also determine the least effective channels and take action to shift your budgets away from them.

Attribution reports in Google Analytics are found in the Conversions section of the left-hand nav bar. I will talk about two of them here: the Model Comparison report and the ROI Analysis report.

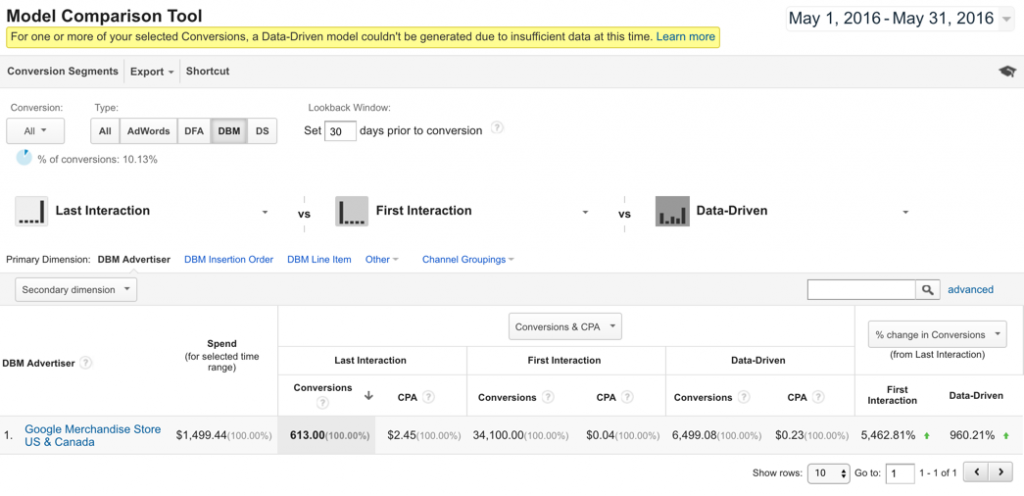

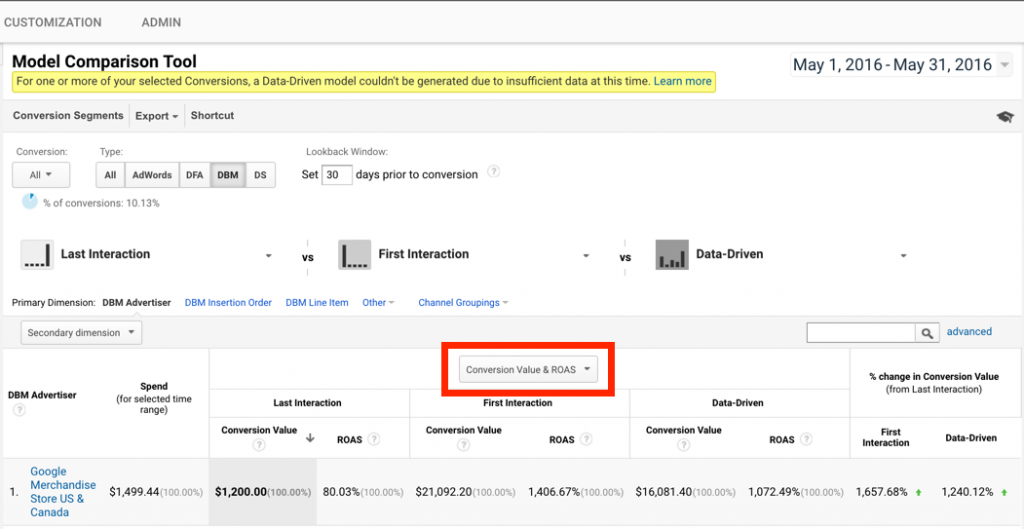

Above you can see two screenshots. The first is looking at the data by Conversions & CPA (this is the default toggle position) while the second has changed the toggle in that box to Conversion Value and ROAS (Return on Ad Spend).

Typically, analytics attributes conversions based on a last-click, or last interaction, model. In this case, that would mean that this DBM campaign for the Google Merchandise Store has driven conversions valued at $1200 with a ROAS of 80% (from the second screenshot) and a Cost-per-acquisition (CPA) of $2.45 (from the first screenshot). That looks pretty bad when we compare it to the spend of nearly $1500 for this campaign.

When we look at this campaign on a first interaction model, the story is very different. The conversion value of this campaign is $21,092.20 with a ROAS of 1,406% and a CPA of $0.04. If we just looked at this campaign based on first interaction, it would look like it’s performing great! If I were a media agency running this campaign for a business, I’d want to just report back based on the first interaction data (a reason why you should always ensure the metrics your agency is giving you reflect the full picture, not just their best interests).
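To make the difference between the two models concrete, here is a minimal sketch of how last interaction and first interaction assign credit. The conversion paths, channel names, and values are hypothetical and only illustrate the idea; this is not how Google Analytics computes its reports internally.

```python
from collections import defaultdict

def attribute(paths, model):
    """Give each conversion's full value to a single touchpoint on its path."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        if model == "first_interaction":
            credit[touchpoints[0]] += value   # first touchpoint gets everything
        elif model == "last_interaction":
            credit[touchpoints[-1]] += value  # last touchpoint gets everything
        else:
            raise ValueError(f"unknown model: {model}")
    return dict(credit)

# Hypothetical conversion paths: (ordered channels, conversion value in dollars)
paths = [
    (["display", "organic", "email"], 100.0),
    (["display", "paid_search"], 50.0),
    (["paid_search"], 25.0),
]

first = attribute(paths, "first_interaction")
last = attribute(paths, "last_interaction")
print("first interaction:", first)
print("last interaction:", last)
```

In this made-up data the display channel opens two of the three paths but never closes one, so it collects most of the value under first interaction and none under last interaction. That is the same pattern the campaign above shows.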

The true impact of this one ad campaign lies somewhere in between what we see for the first and last interaction models. That’s where the Data-Driven model comes in. The Data-Driven model takes multiple touchpoints into account (up to 4) and weights them using an algorithm run in the background to determine the relative impact of this campaign on overall conversions, given the other touchpoints analyzed. It gives us a conversion value of about $16,081.40 with a ROAS of 1,072% and a CPA of $0.23, which seems much more realistic than either of the other two models.
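The ROAS figures for the three models can be reproduced with simple arithmetic: conversion value divided by spend. The sketch below assumes a spend of exactly $1500 (I only quoted "nearly $1500" above), so treat that number as an assumption; the conversion values are the ones from the report.

```python
SPEND = 1500.00  # assumption: the report shows "nearly $1500"

# Conversion value credited to the campaign under each model (from the report)
conversion_value = {
    "last_interaction": 1200.00,
    "first_interaction": 21092.20,
    "data_driven": 16081.40,
}

def roas_percent(value, spend):
    """Return on Ad Spend as a percentage: conversion value / spend * 100."""
    return value / spend * 100

roas = {model: roas_percent(v, SPEND) for model, v in conversion_value.items()}
for model, pct in roas.items():
    print(f"{model}: {pct:,.0f}%")
```

With that assumed spend, the results round to 80%, 1,406%, and 1,072%, which matches what the report shows for each model.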

The last column in this report analyzes the % change in conversions (or conversion value) based on the first model analyzed. When we compare the first interaction to the last interaction, we see that the first interaction performs nearly 5500% better than the last interaction model. As an analyst, I have a few thoughts on this:

1. This campaign seems to work quite well as a top-of-funnel campaign. If the goal of my campaign is awareness, then it seems to be accomplishing that goal with a nice follow-through to conversion.

2. I should not target anyone who has previously visited my site, or use this as a conversion-based campaign, because this campaign doesn’t work well as a last interaction campaign. It would be a waste of ad dollars and would drive down my ROAS.

3. Based on the goal of this campaign, I may want to put more money towards it (if it is an awareness campaign), or stop it altogether (if it is a conversion campaign).
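For reference, the % change column discussed above is a plain percent-change calculation against a baseline model. Here is a small sketch, assuming the baseline is the first model selected in the report. The conversion counts are hypothetical, chosen only to show how large a figure like 5500% really is.

```python
def percent_change(baseline, comparison):
    """Percent change of `comparison` relative to `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (comparison - baseline) / baseline * 100

# Hypothetical conversion counts, not taken from the report
last_interaction_conversions = 10
first_interaction_conversions = 560

change = percent_change(last_interaction_conversions, first_interaction_conversions)
print(f"{change:+,.0f}%")
```

A change of 5500% means the comparison model credits the campaign with 56 times as many conversions as the baseline model does.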

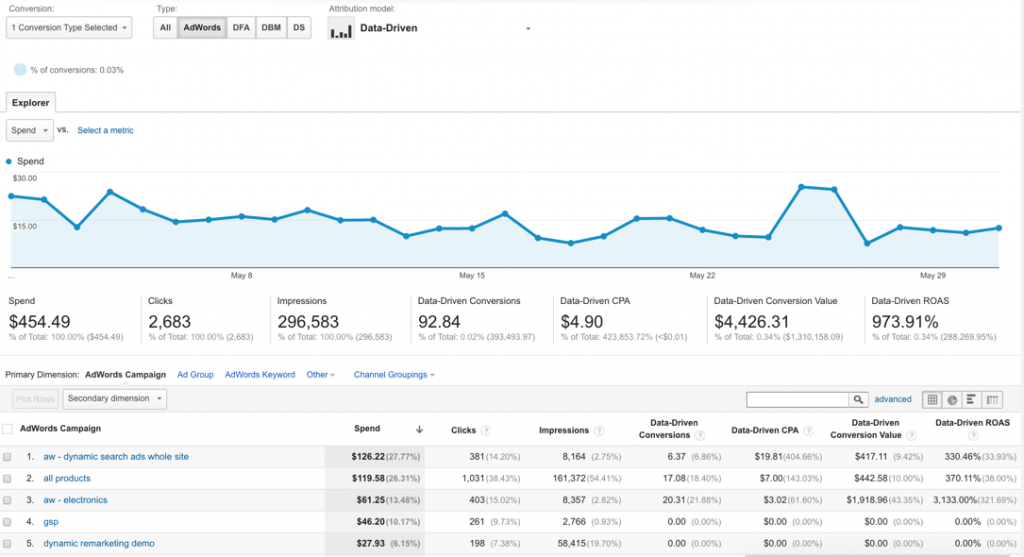

The second report I want to highlight here is the ROI Analysis report.

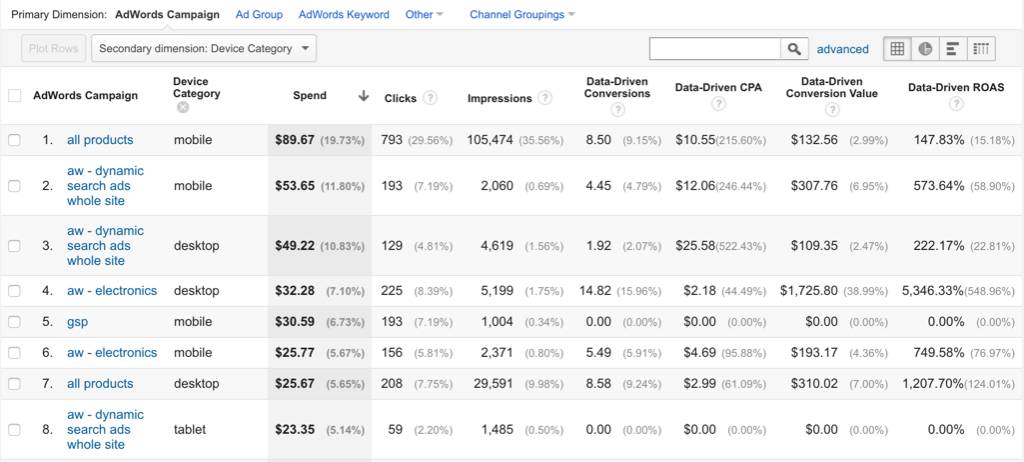

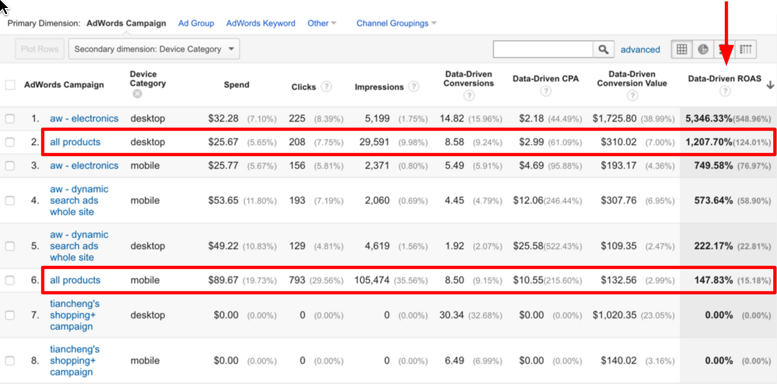

In this case, we are looking at an AdWords campaign for the Google Merchandise Store based on a Data-Driven Attribution model. You can see AdWords metrics such as Spend, Clicks, and Impressions here within the GA interface. One of the biggest benefits of integrating your Search & Display efforts with Google Analytics is being able to slice and dice your Ad data by your GA data and your GA data by your Ad data. To highlight this, I’m going to add a secondary dimension of Device Category to this report to break down this AdWords campaign by the type of device on which someone interacted with the ad.

When you run a table report in Google Analytics, it will by default be sorted by the first metric column, which in this case is the Spend column. I don’t find this all that interesting for this report, so I’m going to re-sort by the Data-Driven ROAS column instead. Now I start to see some interesting things.

As you can see, the ‘all products’ campaign, when served on desktop, has a very high Data-Driven ROAS at ~1200%. This campaign seems to perform quite well on desktop. However, when we look at the same campaign when served on mobile, it performs significantly worse with only a 147% Data-Driven ROAS. When we look at the clicks and impressions for each, we can see that the campaign on mobile is actually driving a lot more clicks/impressions than on desktop, but they aren’t converting. Why such a huge difference?
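If you export this report and want to repeat the same re-sort outside the GA interface, a sketch like the following would do it. The field names and the click counts are hypothetical; only the two ROAS figures come from the report.

```python
# Rows as they might look after exporting the report with Device Category
# as a secondary dimension. Click counts are hypothetical placeholders.
rows = [
    {"campaign": "all products", "device": "mobile", "clicks": 900, "roas_pct": 147.0},
    {"campaign": "all products", "device": "desktop", "clicks": 300, "roas_pct": 1200.0},
]

# Re-sort by Data-Driven ROAS, highest first, instead of the default Spend order
ranked = sorted(rows, key=lambda row: row["roas_pct"], reverse=True)

for row in ranked:
    print(f'{row["campaign"]} / {row["device"]}: '
          f'ROAS {row["roas_pct"]:,.0f}%, clicks {row["clicks"]}')
```

Sorting this way puts the desktop row on top and makes the gap obvious: the segment with more clicks is the one with the much lower ROAS.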

As an analyst, a few potential scenarios could answer this question:

1. The mobile path to conversion could be broken (actually broken, or the UX could be so bad that very few people persist on mobile). To confirm this scenario, I could look at a fallout funnel or analyze page paths/event paths to see if users are actually trying to convert but are dropping off at a particular step/screen.

2. Perhaps the ad is a compelling ad for discovery (product research), but users aren’t ready to buy yet without doing more extensive research on a desktop device. I could confirm this scenario if we were using a User-ID view with a Multi-Channel Funnels report.
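To check the first scenario, the fallout analysis boils down to comparing user counts between consecutive funnel steps. Here is a minimal sketch; the step names and counts are hypothetical and stand in for whatever your mobile funnel actually looks like.

```python
# Hypothetical mobile funnel: (step name, users reaching that step)
funnel = [
    ("product_page", 1000),
    ("add_to_cart", 400),
    ("checkout", 300),
    ("purchase", 30),
]

def drop_off_rates(steps):
    """Share of users lost between each pair of consecutive steps."""
    rates = []
    for (name, count), (next_name, next_count) in zip(steps, steps[1:]):
        rates.append((f"{name} -> {next_name}", 1 - next_count / count))
    return rates

rates = drop_off_rates(funnel)
for transition, rate in rates:
    print(f"{transition}: {rate:.0%} drop-off")

# The transition with the largest drop-off is the first place to look for a broken step
worst = max(rates, key=lambda item: item[1])
print("largest drop-off:", worst[0])
```

In this made-up funnel, most users who start checkout never finish it. If the real mobile data showed that kind of pattern, it would point to a broken or painful step rather than a lack of purchase intent.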

Depending on the answers to the above questions, there are a few different actions I could take:

1. If the mobile UX is broken, I should make sure a fix is put in place ASAP, since this campaign seems to be driving a lot of traffic via mobile and we are missing out on a large number of conversions.

2. If the mobile UX isn’t broken, and customers are discovering via mobile and coming back to purchase via desktop, I should probably keep the campaign going as is, since it’s working well as an awareness campaign on mobile and I’m reaping the benefits down the funnel.

3. If the mobile UX is fine, but users aren’t coming back via desktop and instead just abandoning from the mobile conversion path, I should consider turning this campaign off for mobile, because the awareness it is driving isn’t turning into revenue down the road.

As you can see, real insights into the effectiveness of marketing budgets can be gained by analyzing the Attribution reports available in Google Analytics, and those insights can and should lead to action in terms of shifting budgets towards your most profitable channels.

Peter

I’m a digital analyst. This is an awesome article! Thanks so much for sharing.

Dante

Thanks for sharing. What was the outcome? Was the mobile UX broken?

Bogdan

I don’t see the “Data-driven” model in my Analytics accounts, is this a new feature or how can I activate it?

Krista

Hi there,

Data-driven models are part of GA360. You must have a 360 enabled account, and then under Admin –> View Settings you can toggle the ‘Enable Data-driven models’ switch to on.

Best,

K